Medical schools sit at the crossroads of patient care, research, and education. Faculty often juggle multiple roles at once: clinician, researcher, teacher, mentor, administrator, and leader. Yet many institutions still rely on fragmented systems and scattered documentation to assess their faculty.

The result is reviews that don’t tell the full story, promotion decisions that drag on, and accreditation reviews that require last-minute data assembly. Holistic assessment is not simply a mindset shift; it requires the right infrastructure.

Leaders must rethink how data systems support the assessment and evaluation of faculty performance. Without centralized, structured visibility across defined domains, institutions risk subjective reviews, inefficiencies in tenure and promotion evaluation, and exposure during accreditation.

Below is a practical checklist of the seven essential data areas every medical school should track to enable a more complete and credible approach to faculty assessment.

Why holistic faculty assessment is now a leadership imperative

Medical schools are under increasing pressure from accrediting bodies, healthcare partners, and governing boards to show accountability, transparency, and measurable results.

At the same time:

- Faculty roles are more specialized than ever

- Clinical productivity demands continue to rise

- Team-based research and interprofessional education are expanding

- Expectations around transparency and consistency are higher

In this environment, leadership can’t rely on narrow productivity metrics or informal reporting to guide the assessment and evaluation of faculty performance.

A more complete approach is necessary to:

- Support consistency across tracks and appointment types

- Align advancement and compensation with institutional priorities

- Maintain accreditation confidence

- Reduce bias in review processes

- Strengthen faculty engagement and retention

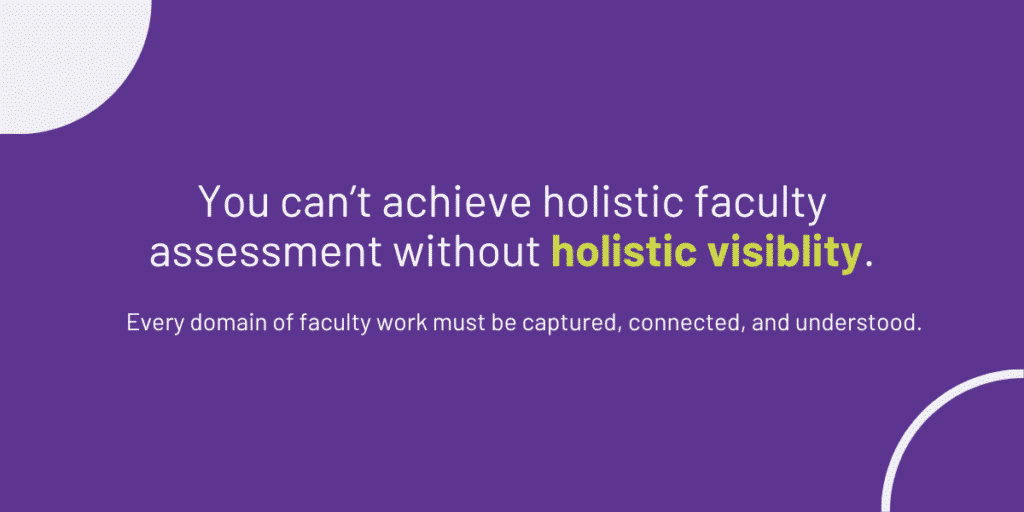

Holistic evaluation has become the standard for medical school faculty assessment. Achieving it requires clear, reliable visibility into the full scope of faculty contributions, supported by structured, centralized data.

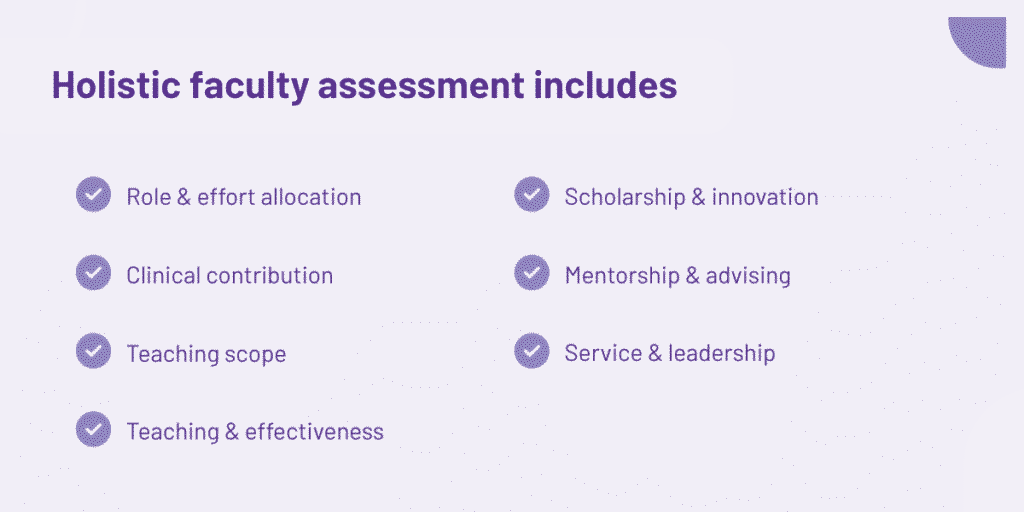

What holistic faculty assessment actually requires

A defensible approach to medical school faculty assessment depends on structured data across clearly defined areas of work. Publications and clinical RVUs alone don’t capture the full scope of academic medicine.

Here are seven core data areas that every medical school should track.

1. Role-aligned effort allocation and performance expectations

Faculty rarely share identical responsibilities. Some are research-heavy. Others focus on clinical care. Many divide their time across teaching, scholarship, and service.

Holistic faculty assessment begins with documented effort allocation, including:

- Appointment track and rank

- Percent effort by mission area (clinical, research, teaching, service)

- Role-specific expectations

Without structured documentation of role expectations, the evaluation of faculty performance becomes subjective. Faculty may be compared against standards that don’t match their actual responsibilities. Clear, role-aligned documentation helps ensure evaluations are grounded in the right benchmarks.
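As an illustration only, the effort-allocation record described above could be modeled roughly as follows. This is a minimal sketch, not a description of any particular product: the `EffortAllocation` class, its field names, and the rule that percent effort must total 100 are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Mission areas named in the checklist above.
MISSION_AREAS = ("clinical", "research", "teaching", "service")


@dataclass
class EffortAllocation:
    """Hypothetical record of one faculty member's documented role."""

    faculty_id: str
    track: str                       # e.g. "Clinician Educator"
    rank: str                        # e.g. "Associate Professor"
    percent_effort: dict[str, float]
    expectations: list[str] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the record is consistent."""
        problems = []
        unknown = set(self.percent_effort) - set(MISSION_AREAS)
        if unknown:
            problems.append(f"unknown mission areas: {sorted(unknown)}")
        if any(p < 0 for p in self.percent_effort.values()):
            problems.append("negative percent effort")
        total = sum(self.percent_effort.values())
        # Assumed rule for this sketch: effort should total 100 percent.
        if abs(total - 100.0) > 0.01:
            problems.append(f"percent effort sums to {total:g}, expected 100")
        return problems


record = EffortAllocation(
    faculty_id="f1",
    track="Clinician Educator",
    rank="Associate Professor",
    percent_effort={"clinical": 60, "teaching": 20, "research": 15, "service": 5},
    expectations=["Lead one clerkship", "Maintain clinical quality targets"],
)
print(record.validate())
```

A check like this catches records that cannot serve as a benchmark (for example, effort that adds up to 110 percent) before they reach a review committee.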

2. Clinical contribution within an academic context (for clinically active faculty)

Productivity metrics alone don’t reflect the academic impact of clinical work.

Medical schools should contextualize:

- Clinical workload

- Quality measures

- Patient outcomes

- Leadership within care teams

- Contributions to clinical innovation

Holistic medical school faculty assessment recognizes that clinical excellence contributes directly to educational quality, research collaboration, and institutional reputation.

When clinical data sits outside academic reporting systems, leadership is left with an incomplete picture of faculty performance.

3. Comprehensive teaching scope and workload

Teaching in medical education extends far beyond scheduled lectures.

Institutions should capture:

- Course and clerkship leadership

- Small-group facilitation

- Clinical supervision

- Curriculum development

- Simulation training

- Interprofessional education

Much of this work happens outside traditional course structures and can go unrecorded.

Without centralized tracking, tenure and promotion committees may underestimate teaching contributions, particularly for clinician educators. Comprehensive teaching documentation also gives the institution a clearer view of its instructional capacity.

4. Evidence of educational effectiveness and learner impact

Teaching volume is not the same as teaching quality.

Holistic faculty assessment should include structured evidence such as:

- Course evaluations

- Peer review of teaching

- Learner performance data

- Board pass rates linked to instructional units

- Adoption of educational innovations

When this information is fragmented, it’s difficult to connect effort with outcomes. Structured integration enables a more credible assessment and evaluation of faculty performance, especially for teaching-focused faculty.

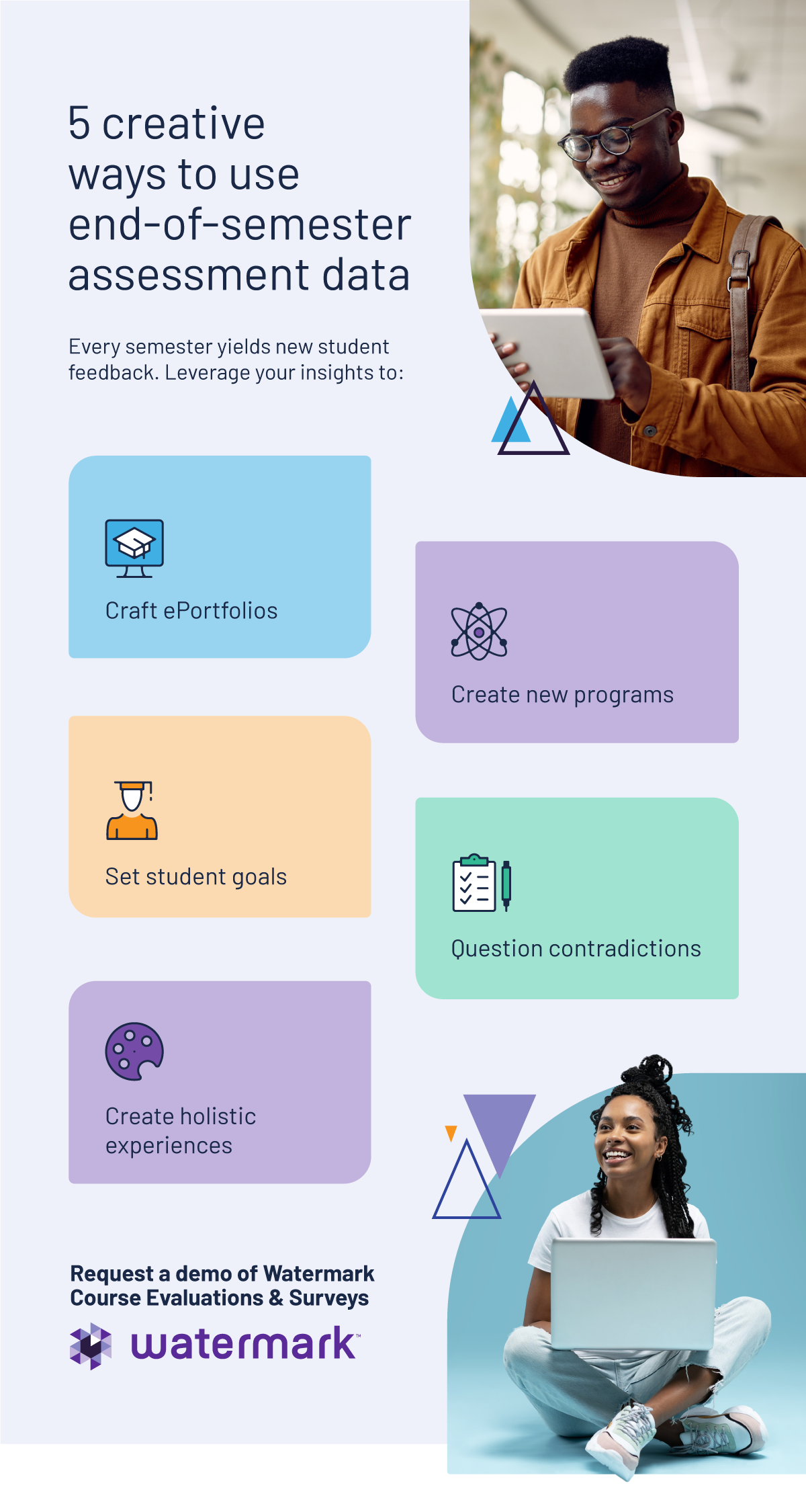

Institutions that move from simply collecting evaluations to using them strategically are better positioned to connect teaching effort with learner outcomes.

5. Scholarship and innovation

In academic medicine, scholarship takes many forms.

Tracking should include:

- Grants and funding

- Publications and citations

- Clinical trial involvement

- Translational research

- Educational research

- Community-engaged scholarship

- Intellectual property and innovation

A broad definition prevents certain tracks from being overlooked. Assessments that rely solely on traditional bibliometrics are not holistic: they risk missing the contributions of clinician educators and community-focused faculty.

6. Mentorship, advising, and talent development contributions

Mentorship is central to institutional continuity, yet it is often undercounted.

Medical schools should document:

- Advising roles

- Residency and fellowship mentorship

- Research supervision

- Faculty mentoring

- Pipeline program participation

These efforts shape learner success and faculty development but are frequently underrepresented in tenure and promotion evaluation processes. Structured tracking makes this work visible.

7. Institutional service, leadership, and mission-driven contributions

Committee work, governance, and accreditation support are essential to fulfilling the mission.

Institutions should capture:

- Committee membership and leadership

- Accreditation roles

- Strategic planning roles

- Community engagement

Without centralized documentation, service can become “hidden labor,” unevenly distributed and insufficiently recognized.

Holistic faculty assessment must reflect these contributions to ensure balanced workload recognition and institutional transparency.
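To make the idea of unevenly distributed service concrete, here is a small sketch of how committee assignments might be tallied and unusually heavy loads flagged. The function names, the input shape, and the "more than twice the median" threshold are assumptions chosen for illustration, not an established standard.

```python
from statistics import median


def service_load(roster, assignments):
    """Count committee assignments per faculty member.

    roster: iterable of faculty IDs, so people with no service still count as 0.
    assignments: iterable of (faculty_id, committee_name) pairs.
    """
    load = {faculty_id: 0 for faculty_id in roster}
    for faculty_id, _committee in assignments:
        load[faculty_id] = load.get(faculty_id, 0) + 1
    return load


def flag_heavy_load(load, ratio=2.0):
    """Return faculty whose count exceeds `ratio` times the median (assumed rule)."""
    if not load:
        return []
    typical = median(load.values())
    return sorted(fid for fid, count in load.items() if count > ratio * typical)


roster = ["f1", "f2", "f3"]
assignments = [
    ("f1", "Admissions"), ("f1", "Curriculum"), ("f1", "Accreditation"),
    ("f1", "Promotions"), ("f1", "Wellness"),
    ("f3", "Curriculum"), ("f3", "Research Council"),
]
load = service_load(roster, assignments)
print(flag_heavy_load(load))
```

Including the full roster matters: if faculty with no committee roles are left out, the median rises and heavy service loads look more typical than they are.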

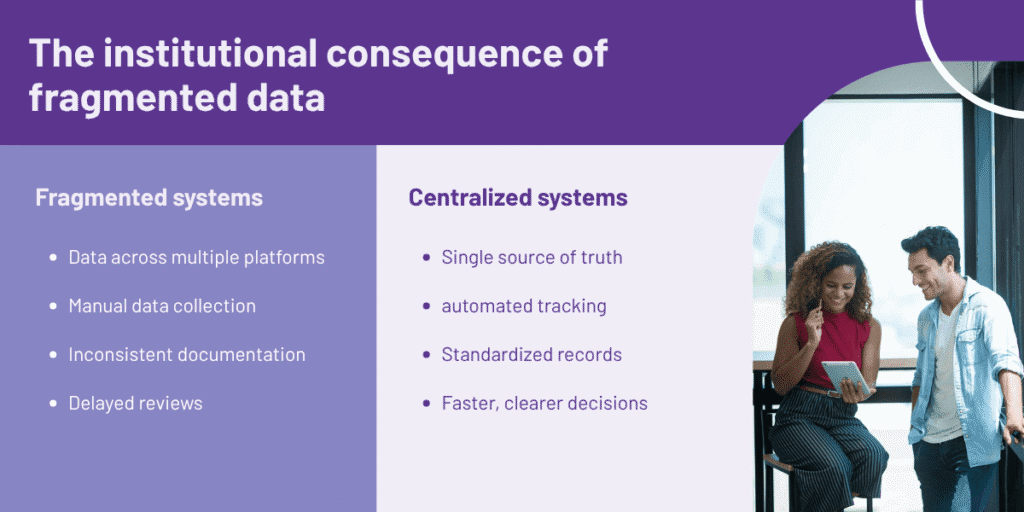

The institutional consequence of fragmented data

When faculty information is spread across HR systems, clinical dashboards, learning management platforms, and research databases, leaders don’t have a clear, unified view of performance.

Fragmentation often results in:

- Delays in promotion and tenure reviews

- Last-minute data pulls during accreditation

- Varying documentation standards across departments

- Heavier administrative workload

- Faculty frustration with the process

Over time, confidence in assessment and evaluation of faculty performance starts to erode. Many institutions are also trying to better link documentation to continuous improvement efforts. But that’s difficult when data lives in separate systems that don’t talk to each other.
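As a rough sketch of what a unified view involves, the example below merges per-system exports keyed by a shared faculty ID and reports which systems have no record for each person. The system names, record fields, and the assumption that every source shares one faculty identifier are hypothetical; in practice, reconciling identifiers across systems is often the hardest part.

```python
from __future__ import annotations


def consolidate(sources: dict[str, dict[str, dict]]) -> dict[str, dict[str, dict]]:
    """Merge {system: {faculty_id: record}} into {faculty_id: {system: record}}."""
    unified: dict[str, dict[str, dict]] = {}
    for system, records in sources.items():
        for faculty_id, record in records.items():
            unified.setdefault(faculty_id, {})[system] = record
    return unified


def missing_systems(unified: dict[str, dict[str, dict]], required: list[str]) -> dict[str, list[str]]:
    """List, per faculty member, the required systems that hold no record for them."""
    gaps = {}
    for faculty_id, by_system in unified.items():
        absent = [system for system in required if system not in by_system]
        if absent:
            gaps[faculty_id] = absent
    return gaps


# Hypothetical exports from three separate systems.
sources = {
    "hr": {"f1": {"rank": "Associate Professor"}, "f2": {"rank": "Professor"}},
    "lms": {"f1": {"courses_led": 3}},
    "research": {"f2": {"active_grants": 1}},
}
unified = consolidate(sources)
print(missing_systems(unified, ["hr", "lms", "research"]))
```

A gap report like this turns "last-minute data pulls" into a routine check: missing records surface long before a promotion file or accreditation report is due.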

Ultimately, the expectations placed on the modern medical school have outpaced what fragmented data can support. Accrediting bodies demand reliability, promotion committees require consistency, and faculty deserve fairness. Without a centralized infrastructure, holistic evaluation remains an aspirational goal rather than an institutional reality.

Moving from data collection to strategic oversight

Simply collecting information isn’t enough. Leadership teams need to be able to:

- See performance across all mission areas in one place

- Evaluate faculty against clearly defined role expectations

- Deliver accreditation documentation without delays

- Monitor workload distribution across departments

- Spot emerging leaders and identify mentorship gaps

Holistic medical school faculty assessment means shifting from reactive data gathering to ongoing, structured oversight. When information is centralized and organized:

- Faculty performance conversations become more objective

- Tenure and promotion evaluation workflows accelerate

- Accreditation reporting is easier to support with documentation

- Strategic decisions are informed by reliable data

Strong infrastructure supports consistency. Clear visibility supports better leadership decisions.

Conclusion: Holistic assessment requires holistic visibility

Medical schools cannot claim holistic faculty assessment without a centralized data foundation. When key domains such as effort allocation, clinical contribution, teaching scope, learner impact, scholarship, mentorship, and service are siloed, the story of faculty impact remains incomplete.

Each domain tells part of the story. Together, they enable a fair, defensible, and mission-aligned assessment and evaluation of faculty performance. By centralizing this infrastructure, leaders gain clarity and confidence in their approach to recognizing and rewarding their faculty. The question isn’t whether holistic assessment matters; it’s whether your current systems are standing in its way.

See how your faculty data infrastructure compares. Schedule a meeting with Watermark to learn more.