Artificial intelligence is no longer a future concept in higher education; it’s already reshaping how institutions teach, measure learning, and demonstrate effectiveness. Nowhere is this more evident than in assessment. When applied thoughtfully, AI in assessment can help colleges and universities strengthen evidence of learning, reduce administrative burden, and support continuous improvement at scale.

For leaders navigating accountability pressures, resource constraints, and rising expectations for outcomes, AI in assessment for higher education offers a new path forward, one that enhances, rather than replaces, human expertise.

What AI-enhanced assessment actually means

AI-enhanced assessment refers to the responsible use of artificial intelligence to support assessment design, data analysis, and decision-making. In practice, this can include:

- Helping faculty draft learning outcomes, rubrics, or survey questions

- Analyzing large volumes of assessment data to surface trends and gaps

- Supporting consistency and alignment across programs and modalities

- Streamlining reporting for accreditation and institutional planning

Importantly, AI in college assessments is not about automating judgment or replacing faculty insight. Instead, it augments human decision-making, handling repetitive, time-intensive tasks so educators and leaders can focus on interpretation, improvement, and student impact.

Why AI-enhanced assessment matters more than ever

Higher education is operating in a moment of heightened scrutiny and complexity. Institutions are being asked to do more with less, while also providing clearer evidence of learning and workforce relevance.

AI in assessment for education matters now because it helps institutions:

- Keep pace with growing data volume and complexity

- Improve the consistency and quality of assessment practices

- Respond faster to emerging gaps in student learning

- Build stronger, more defensible accreditation narratives

- Reduce workload burden and save time

- Encourage participation in assessment

At a time when assessment fatigue is real, AI can help reframe assessment as a meaningful, actionable practice rather than a compliance exercise.

The current assessment landscape: Challenges institutions face

Before exploring modern assessment solutions, it’s important to acknowledge the realities many institutions face today:

- Fragmented data across systems, departments, and programs

- Manual processes that consume valuable faculty and staff time

- Inconsistent assessment practices across sections and modalities

- Difficulty translating data into insight leaders can act on

- Last-minute accreditation scrambles driven by disconnected evidence

These challenges limit the value of assessment and increase burnout. AI, when applied strategically, can help address these pain points, but only if institutions start with clarity and purpose.

How to implement AI-enhanced assessment at your institution

Step 1: Clarify your goals for AI-enhanced assessment

Begin by defining what success looks like within a cohesive AI governance framework. Are you aiming to improve assessment quality? Reduce reporting burden? Strengthen evidence for accreditation?

This is also the stage where institutions should define AI governance guardrails, clarifying oversight responsibilities, acceptable-use parameters, documentation standards, and alignment with compliance expectations. The strongest implementations tie AI use directly to institutional priorities, formal governance protocols, and student outcomes.

Step 2: Identify where AI can add the most value

Not every assessment task benefits equally from AI. High-impact use cases often include:

- Drafting or refining learning outcomes and rubrics

- Analyzing trends across courses or programs

- Identifying gaps or inconsistencies in assessment evidence

- Summarizing large data sets for leadership review

These are areas where AI tools for assessment can save time while improving clarity and consistency – provided institutions clearly define which tools are approved, establish guardrails for responsible use, and ensure alignment with data privacy and governance policies. Some may also rely on vetted vendors with established AI governance frameworks to help manage risk.
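As a rough illustration of the "identifying gaps" use case, the sketch below aggregates rubric scores by program and outcome, then flags any pairing whose attainment rate falls under a target. It is plain aggregation rather than an AI model, and the record layout, the "meets expectations" score of 3, and the 70% target are all assumptions invented for this example, not institutional standards or any product's behavior.

```python
from collections import defaultdict

# Hypothetical rubric records: (program, outcome_id, score on a 1-4 scale).
SCORES = [
    ("Biology", "LO1", 4), ("Biology", "LO1", 3), ("Biology", "LO2", 2),
    ("Biology", "LO2", 1), ("Nursing", "LO1", 3), ("Nursing", "LO2", 4),
]

def attainment_rates(records, meets_threshold=3):
    """Return {(program, outcome): share of scores at or above the threshold}."""
    totals = defaultdict(int)
    met = defaultdict(int)
    for program, outcome, score in records:
        key = (program, outcome)
        totals[key] += 1
        if score >= meets_threshold:
            met[key] += 1
    return {key: met[key] / totals[key] for key in totals}

def flag_gaps(rates, target=0.70):
    """List program/outcome pairs below the target, lowest rate first."""
    gaps = [(key, rate) for key, rate in rates.items() if rate < target]
    return sorted(gaps, key=lambda item: item[1])

if __name__ == "__main__":
    for (program, outcome), rate in flag_gaps(attainment_rates(SCORES)):
        print(f"{program} {outcome}: {rate:.0%} met the threshold; review recommended")
```

A flagged pairing is a prompt for faculty review, not a conclusion; the threshold and target would need to come from each program's own standards.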

Step 3: Prepare your data for AI

AI is only as effective as the data it works with. Governance standards should ensure that assessment data is:

- Well-organized and structured

- Consistently labeled and aligned to outcomes

- Accessible across departments and programs while maintaining strict data privacy and security

Preparing data isn’t glamorous, but it’s foundational. Clean, connected data enables AI to generate insights leaders can trust.
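One way to picture "consistently labeled and aligned to outcomes" is a simple pre-check run before any data reaches an AI tool. The sketch below is a minimal example under stated assumptions: the field names, the outcome catalog, and the sample identifiers are hypothetical and would differ at every institution.

```python
# Hypothetical outcome catalog and required fields, for illustration only.
OUTCOME_CATALOG = {"LO1", "LO2", "LO3"}
REQUIRED_FIELDS = ("program", "term", "outcome_id", "score")

def validate_record(record):
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field_name in REQUIRED_FIELDS:
        if record.get(field_name) in (None, ""):
            problems.append(f"missing {field_name}")
    outcome = record.get("outcome_id")
    if outcome and outcome not in OUTCOME_CATALOG:
        problems.append(f"unknown outcome_id {outcome!r}")
    return problems

def audit(records):
    """Map record index -> problems, skipping records that pass every check."""
    report = {}
    for index, record in enumerate(records):
        problems = validate_record(record)
        if problems:
            report[index] = problems
    return report
```

Running `audit` on a batch of records returns only the entries that need cleanup, which keeps the fix-up work visible and small before a pilot begins.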

Step 4: Start with a pilot project

Rather than launching institution-wide, begin with a focused pilot, such as a single program, assessment cycle, or reporting workflow. Pilots provide a controlled environment to test your new AI policies and surface governance hurdles before scaling. They also help build confidence and buy-in among faculty and staff.

Step 5: Build institutional AI literacy

Successful adoption depends on people, not just technology. Training should focus heavily on your institution’s specific AI policies, helping faculty and staff understand:

- What AI can and cannot do

- How AI supports (not replaces) professional judgment

- How AI outputs should be interpreted and validated

AI literacy fosters trust and ensures AI is used as a tool for improvement, not a black box that bypasses institutional standards.

Step 6: Ensure human oversight and continuous monitoring

AI-enhanced assessment must always include human review. Faculty and administrators remain responsible for interpreting results and making decisions. This oversight must extend beyond simply checking the final output. To trust these findings, leaders need to see the logic and specific data points the AI used to reach its conclusions. Understanding this rationale helps confirm that the AI’s “thinking” aligns with the actual context of your campus.

Continuous monitoring helps ensure that both the AI outputs and the reasoning behind them stay accurate, unbiased, and aligned with institutional values as the technology evolves.
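To make the idea of reviewable reasoning concrete, here is a minimal sketch of a record that keeps an AI-generated finding together with its rationale, the evidence it cites, and the human sign-off. The `AIFinding` class, its fields, and the approval rule are hypothetical names chosen for illustration; they do not describe any particular tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class AIFinding:
    """An AI-generated finding plus the evidence and review trail behind it."""
    summary: str
    rationale: str
    evidence_ids: List[str] = field(default_factory=list)  # IDs of source records
    reviewed_by: Optional[str] = None
    approved: bool = False

    def approve(self, reviewer: str) -> None:
        """Record human sign-off; refuse findings with no traceable basis."""
        if not self.rationale.strip() or not self.evidence_ids:
            raise ValueError("finding needs a rationale and cited evidence before approval")
        self.reviewed_by = reviewer
        self.approved = True

def releasable(findings):
    """Only human-approved findings move forward to leadership reports."""
    return [finding for finding in findings if finding.approved]
```

The design choice worth noting is that approval is impossible without a stated rationale and cited evidence, so a reviewer always has something specific to check.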

Ethical, privacy, and compliance considerations

With opportunity comes responsibility. Institutions must address key considerations when implementing AI in assessment for higher education, including:

- Data privacy and security, especially for student information

- Transparency around how AI is used and how outputs are generated

- Bias mitigation, ensuring AI does not reinforce inequities

- Alignment with accreditation and regulatory expectations

Ethical AI use builds trust with faculty, students, and external stakeholders alike.

Real-world examples of AI-enhanced assessment in action

Across higher education, institutions are beginning to apply AI in practical, assessment-focused ways, such as:

- Identifying patterns in learning outcomes across programs to guide curriculum improvement

- Supporting more consistent rubric language across multi-section courses

- Accelerating the preparation of assessment reports for accreditation

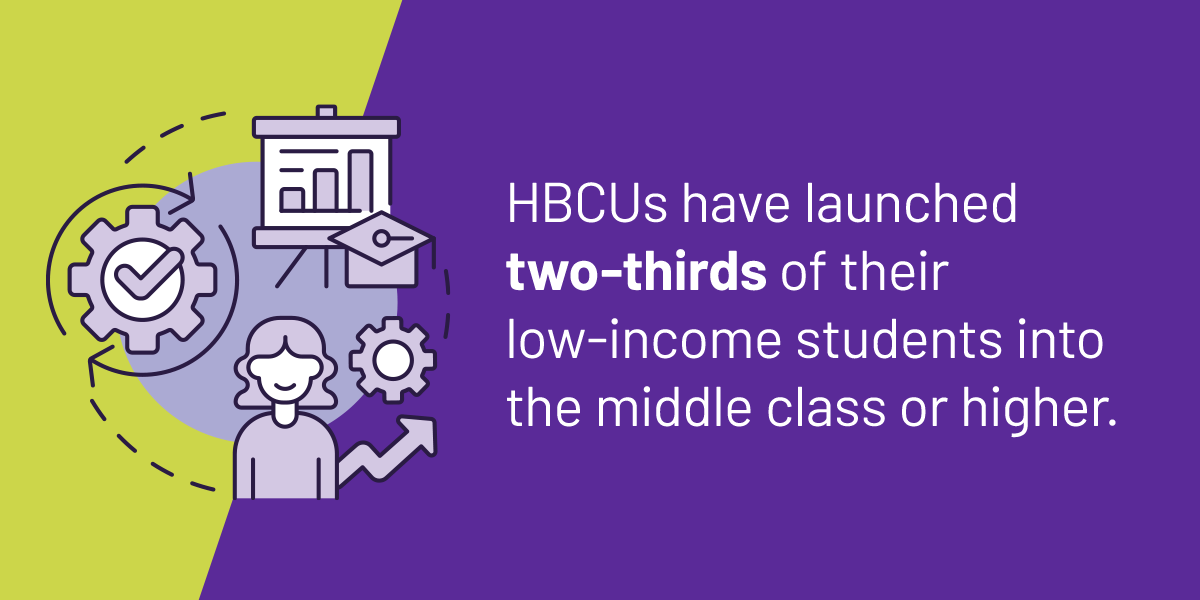

- Highlighting equity gaps in student learning data that warrant deeper review

These examples demonstrate how AI enhances visibility and insight, all without removing human judgment from the process.

How Watermark supports AI-enhanced assessment

Watermark’s approach to AI is grounded in a clear philosophy: technology should strengthen assessment, not complicate it. Rather than a one-size-fits-all application, Watermark integrates AI where it can most effectively accelerate, orchestrate, and illuminate the work of faculty and administrators. Plus, the AI-powered features are optional – institutions control whether the features are enabled and which units have access to them.

Within the Watermark ecosystem, these AI-driven capabilities allow institutions to:

- Accelerate the process: Use inline AI to cut through workflow friction by parsing data and summarizing evidence. By simplifying these time-consuming processes, you can focus on high-impact work.

- Orchestrate complex workflows: Reduce administrative cycles by using AI to help manage action plans and follow-ups. With human oversight at every step, this removes the repetitive tasks that often stall institutional progress.

- Illuminate the path forward: Unify disconnected data to surface trends, identify risks, and recommend the most effective next steps for all roles. This provides the meaning behind the data, leading to better decisions and stronger alignment between outcomes and actual improvement.

A primary example of this philosophy in action is Instructor Insights, a feature within Watermark Course Evaluations & Surveys. It uses AI to synthesize student feedback, helping faculty quickly identify themes and actionable takeaways that fuel professional growth.

By embedding these specific tools into established workflows, Watermark helps institutions realize the practical benefits of AI while maintaining clarity, trust, and human control.

Getting started: A practical checklist

For leaders ready to explore AI-enhanced assessment, consider these starting points:

- Don’t skip the governance step. Establish your usage guidelines and privacy standards early so everyone knows what success and safety look like.

- Identify high-value assessment workflows for AI support

- Ensure assessment data is organized and accessible

- Pilot AI use in a focused, low-risk area

- Invest in AI literacy and shared understanding

- Establish guidelines for oversight, ethics, and review

Small, intentional steps build momentum and confidence.

AI-enhanced assessment represents a meaningful shift in how higher education understands and improves learning. When used thoughtfully, AI in assessment can reduce burden, increase insight, and strengthen institutional effectiveness. It can do this without sacrificing academic integrity or human expertise.

The opportunity is not to adopt AI for its own sake, but to apply it where it matters most: improving learning and telling a clearer, more compelling story of impact.

With the right strategy, AI in institutional assessments becomes not just a technology upgrade, but a catalyst for better decisions and better outcomes. To learn more, schedule a demo now.