According to a recent Gallup poll, Americans’ confidence in higher education is at an all-time low — and while many different factors contribute to this issue, a lack of institutional data transparency is one of the most significant.

Achieving data transparency can help your institution regain stakeholder trust, improve the student experience, and maintain its competitiveness in the higher education landscape. But there are several key steps you’ll need to take to reach this goal.

What is data transparency?

Data transparency refers to the practice of making sure your institution’s data is accessible, understandable, and readily available to stakeholders who have a legitimate interest in it. It involves maintaining a culture of openness about where that data comes from and how you use it.

In the business world, for example, customers expect businesses to disclose their data practices. Now that most institutions of higher education are collecting more data as part of digital transformation efforts, students and other key stakeholders are beginning to express similar sentiments.

Obstacles to data transparency in higher education

Some of the most common obstacles institutions face in achieving data transparency include:

- Data silos: Much of your institution’s data becomes inaccessible when departments use disparate storage and management systems, which creates a fragmented view of your institution.

- Data quality: Siloed and inaccessible data can make data governance and management more difficult, potentially allowing errors and outdated information to impact your decision-making processes.

- Amount of data: Higher education institutions collect vast amounts of data, which can overload outdated digital infrastructure.

- Change resistance: Achieving data transparency often requires a cultural shift within your institution. As with any change, you’re likely to encounter some resistance from internal stakeholders, which can make your goals significantly harder to reach.

Proper planning and clear, open communication are essential for overcoming these challenges. For example, explaining the benefits of data transparency to institutional leadership can help you gain their approval, which can make getting buy-in from the rest of your institution easier.

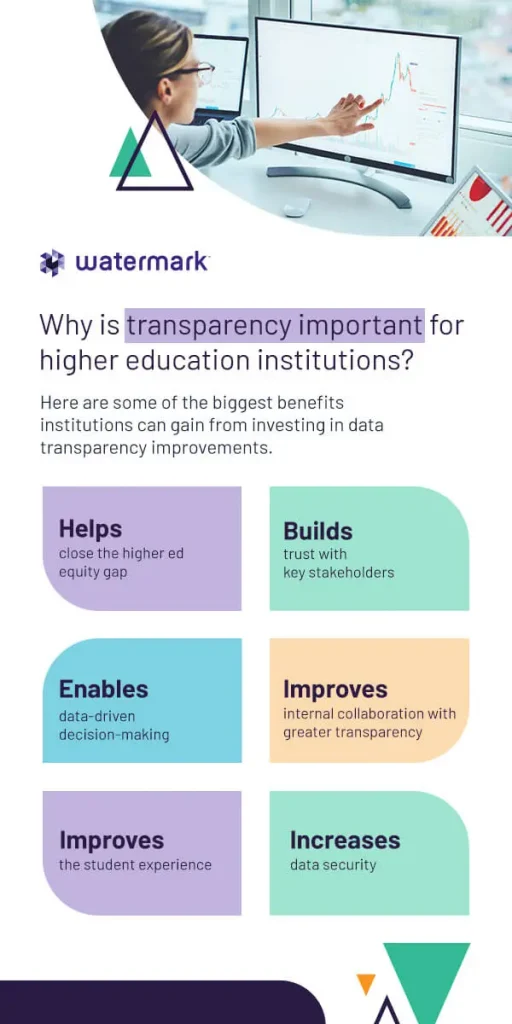

Why is transparency important for higher education institutions?

It’s hard to overstate the importance of data transparency for higher ed. In fact, it’s critical for ensuring better outcomes moving forward. Here are some of the biggest benefits institutions can gain from investing in data transparency improvements.

Helps close the higher ed equity gap

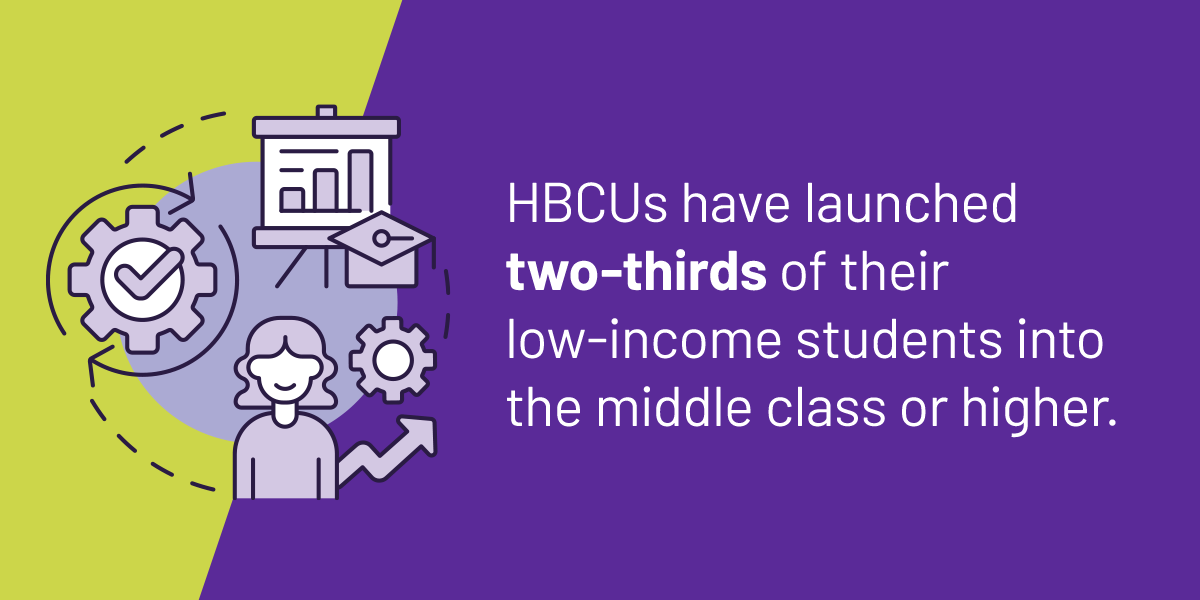

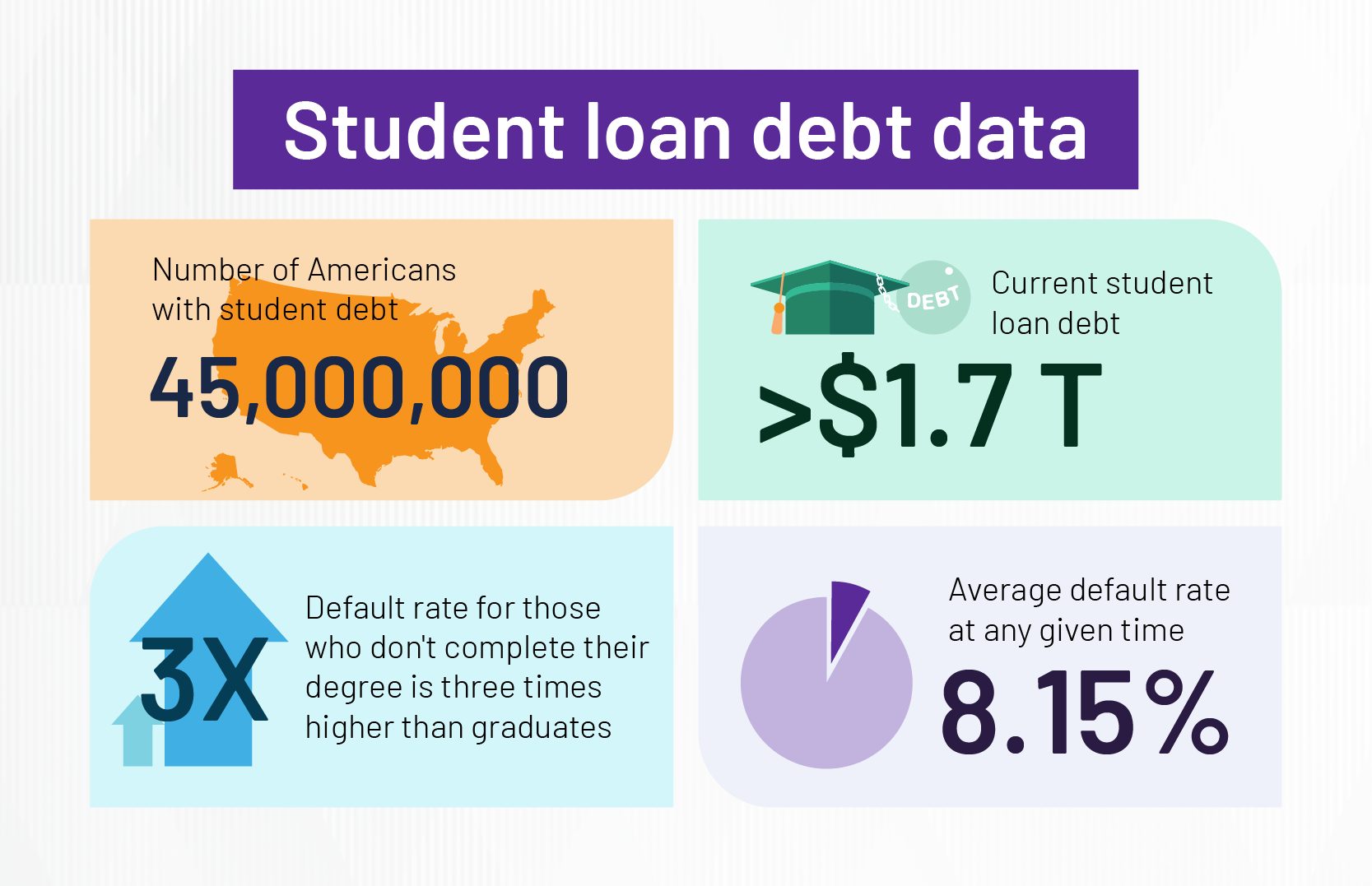

Although earning a degree or other credential from a higher education institution is one of the most reliable ways for students to improve their socioeconomic outcomes, many students face challenges that can prevent them from completing their education.

Data transparency can help your institution close critical equity gaps in education access and student success by making your data usable. You can then use that data to:

- Predict which students are most at risk of dropping out.

- Give students more agency over their educational journeys.

- Facilitate smoother delivery of student support services.

- Improve programs and course offerings across various disciplines.

- Identify new opportunities for financial aid and scholarships.

- Build a more diverse student body.

Investing in a robust student success software solution can help make your data more transparent by creating a centralized, cloud-based hub for streamlined storage and management.

Builds trust with key stakeholders

Higher education is a significant investment of time and resources for internal and external stakeholders. Providing open access to your data and being honest about your practices can help you maintain positive relationships with:

- Students: Enabling easy access to educational records and being open about your data collection practices are essential steps for improving the student experience. This transparency eliminates some of the barriers to accessing services, which can help you boost student satisfaction, engagement, and successful outcomes.

- Family members: Providing easier access to information about your institution’s finances and student outcomes can help parents and other family members feel more engaged with your institution, which can, in turn, improve student success metrics like retention rates, graduation rates, and time to completion.

- Alumni: Your graduates have invested significant time and money into attending your institution, and providing data that shows your commitment to achieving your mission and benefiting the greater community can help you boost alumni engagement and potentially increase donations.

- Donors: To the people who fund your institution, data transparency provides necessary evidence that their donations are going to a worthy cause. Thorough reports of your progress toward key institutional goals can help keep donors engaged.

Improves internal collaboration with greater transparency

Collaboration is essential for continuous improvement and innovation in higher education, and having an accurate inventory of all your institutional data can help facilitate better collaborative work among colleagues.

Improving data transparency creates a culture of trust and accountability among administrators, faculty, and other staff by providing greater visibility into the impact each group has on your institutional goals. This cultural shift can:

- Build stronger relationships between faculty and staff.

- Encourage greater collaboration among internal stakeholders.

- Better align departmental decisions to overarching goals.

- Spark innovation in faculty research.

Additionally, a cloud-based data storage and management platform can help you ensure all collaborators are on the same page for continuous progress.

Enables data-driven decision-making

Data-driven decision-making (DDDM) is more than a business buzzword — it’s a necessity for improving outcomes at your institution. And although higher education institutions are swimming in data, a lack of transparency limits your ability to use that data to your advantage.

When you invest in transparency, you create a seamless flow of data across your institution. That access lets your decision-makers generate accurate insights on demand and make more effective, efficient decisions.

Improves the student experience

Student data transparency is essential for student success. More access to their data helps students make better decisions regarding their educational journeys, from planning which classes they’ll take to exploring different career paths.

For example, a lack of data transparency at Fayetteville Technical Community College (FTCC) made many students ineligible for course enrollment. Without easy access to their educational records, students were often unaware that they lacked the required prerequisites to enroll in certain courses until well into the registration period — which impacted their chances of completing their programs on time.

Implementing an integrated software solution that opened visibility into student data across the institution gave FTCC students more flexibility and helped boost retention and graduation rates across various student populations.
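To make the prerequisite problem concrete, here is a minimal Python sketch of the kind of check that visible student records make possible. It is an illustration only, not a description of the system FTCC used: the course codes, the `PREREQUISITES` catalog, and the `missing_prerequisites` function are all hypothetical.

```python
# Hypothetical catalog mapping each course to its prerequisites (illustrative data only).
PREREQUISITES = {
    "MATH-102": {"MATH-101"},
    "BIO-201": {"BIO-101", "ENG-101"},
}


def missing_prerequisites(course, completed):
    """Return the prerequisites for `course` that are not in `completed`."""
    required = PREREQUISITES.get(course, set())
    return sorted(required - set(completed))


# A student who can see this result before registration opens has time to adjust.
completed_courses = ["ENG-101", "MATH-101"]
print(missing_prerequisites("BIO-201", completed_courses))   # ['BIO-101']
print(missing_prerequisites("MATH-102", completed_courses))  # []
```

Running a check like this when students plan their schedules, rather than at enrollment time, is what surfaces the gap early.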

Increases data security

All higher education institutions that receive federal funding from the U.S. Department of Education must comply with the Family Educational Rights and Privacy Act (FERPA) of 1974. This law prohibits institutions from disclosing student education records or other personally identifiable information (PII) unless:

- The student provides written consent.

- The disclosure is basic directory information.

- The disclosure meets the criteria outlined in FERPA 34 CFR Section 99.31.

Data transparency can help you improve your FERPA compliance management by creating a clear trail for audits. With the right data management and analytics tools, you can give auditors a complete record of the data you collect and the disclosures you make, so they can quickly evaluate your systems and data usage.
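As a rough illustration of what such a trail might look like, the Python sketch below records every disclosure decision along with the basis for it, using the three conditions listed above. The `DisclosureRequest` and `DisclosureLog` names and their fields are hypothetical. Whether a request actually meets a 34 CFR 99.31 criterion is assumed to be decided by your compliance staff and passed in as a flag; the code does not evaluate it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DisclosureRequest:
    student_id: str
    requester: str
    has_written_consent: bool = False
    is_directory_information: bool = False
    meets_99_31_criteria: bool = False  # assumption: set by compliance staff, not computed here


@dataclass
class DisclosureLog:
    entries: list = field(default_factory=list)

    def review(self, request):
        """Log the decision for `request` and return True if a listed condition holds."""
        if request.has_written_consent:
            basis = "written consent"
        elif request.is_directory_information:
            basis = "directory information"
        elif request.meets_99_31_criteria:
            basis = "34 CFR 99.31"
        else:
            basis = None
        # Denied requests are logged too, so the trail shows every decision.
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "student_id": request.student_id,
            "requester": request.requester,
            "allowed": basis is not None,
            "basis": basis,
        })
        return basis is not None


log = DisclosureLog()
log.review(DisclosureRequest("S001", "registrar", has_written_consent=True))
log.review(DisclosureRequest("S002", "outside vendor"))
for entry in log.entries:
    print(entry["student_id"], entry["allowed"], entry["basis"])
```

Logging refusals as well as approvals is a deliberate choice in this sketch: an auditor can then see every request that was made, not only the ones that were granted.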

6 tips for improving higher education data transparency

Knowing why data transparency is important is the first step, but your institution must put it into practice to gain the benefits listed here. Here are some actionable tips you can use to begin working toward data transparency at your institution.

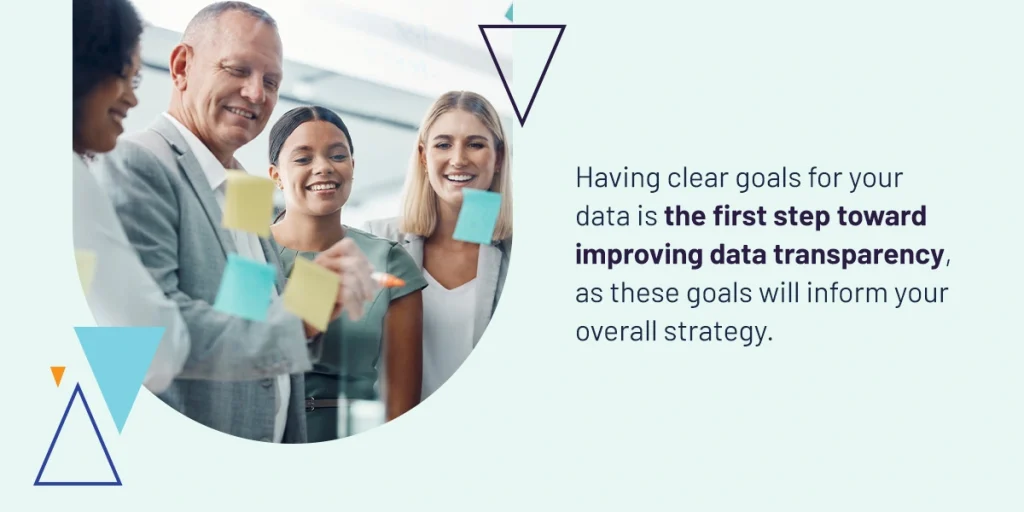

1. Set and report achievable data goals

Having clear goals for your data is the first step toward improving data transparency, as these goals will inform your overall strategy.

For example, do you plan to use demographic data to improve student recruitment efforts? Or do you plan to analyze assessment scores and student feedback over time to determine an instructor’s effectiveness as a lecturer? The goals you set will determine:

- What types of data you need to collect.

- How you will collect that data.

- What techniques you will use to process that data.

- How you will act on the insights you gain.

- When you will update stakeholders on progress.

Essentially, you need to clearly explain to your stakeholders what data your institution will collect from them and how you plan to use it. This transparency will help build trust with your data sources, which can make them more willing to share their data with you in the future.

2. Create a data transparency policy

Your institutional leadership should establish a clear, straightforward data transparency policy and communicate it to all faculty, staff, and other stakeholders.

Your policy should cover these key items:

- Data collection practices: Disclose to students and other stakeholders what data your institution collects from them, and provide a clear explanation of the collection techniques you use.

- Usage guidelines: Your policy should outline what your institution does with the data it collects and how this usage benefits key stakeholders.

- Compliance measures: List and define the data privacy and security regulations that apply to your institution as well as the measures you take to ensure compliance.

Make sure your policy is documented and easily accessible to everyone in your institution, including students, faculty, administrators, and other staff. An easy-to-reference policy gives stakeholders a clear means of holding your institution accountable, which helps you maintain their trust.

3. Improve data management practices

While you may think making your data more accessible would open your institution up to cyber threats, increased transparency can actually help you mitigate your security risk, as long as you take proper care of your data and infrastructure.

Effective data management strategies, which are critical for achieving data transparency, help you take inventory of all your data and track its usage. Their benefits include:

- Improved accuracy: Following data management best practices helps remove errors and outdated data points from your system, enabling better analysis and decision-making.

- Data discovery: Regularly managing your data can help your institution improve system visibility and uncover the right data sets for research, program implementation, and reporting.

- Streamlined compliance: Data management helps protect your institution from costly noncompliance penalties by ensuring your data is always current, accurate, and secure.
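A routine check for errors and outdated data points could look something like the following Python sketch. The record layout (`student_id`, `last_updated`), the one-year staleness threshold, and the `audit_records` function are assumptions made for illustration, not a prescribed standard.

```python
from datetime import date


def audit_records(records, today, max_age_days=365):
    """Return (duplicate_ids, stale_ids) for a list of record dicts.

    Each record needs a 'student_id' and a 'last_updated' date.
    """
    seen = set()
    duplicates = set()
    stale = set()
    for record in records:
        student_id = record["student_id"]
        if student_id in seen:
            duplicates.add(student_id)  # same ID appears more than once
        seen.add(student_id)
        if (today - record["last_updated"]).days > max_age_days:
            stale.add(student_id)  # not updated within the allowed window
    return sorted(duplicates), sorted(stale)


records = [
    {"student_id": "S001", "last_updated": date(2024, 1, 15)},
    {"student_id": "S002", "last_updated": date(2022, 6, 1)},
    {"student_id": "S001", "last_updated": date(2024, 3, 2)},
]
print(audit_records(records, today=date(2024, 6, 1)))
# (['S001'], ['S002'])
```

Passing `today` in explicitly, instead of reading the system clock inside the function, keeps the check repeatable when you rerun it for a report.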

4. Follow data security best practices

Investing time and resources into data security initiatives is essential for more than compliance with FERPA and other important data regulations — it’s also critical for maintaining stakeholder trust and achieving your overarching mission.

Some examples of data security best practices you can implement at your institution include:

- Security awareness training: Did you know the majority of data breaches involve the human element? Training faculty and staff to recognize and respond to cyber threats can reduce your risk of a breach and instill greater confidence in key stakeholders.

- Third-party cybersecurity services: Outsourcing cybersecurity tasks to a reliable managed service provider (MSP) can help your institution mitigate risk while freeing up your IT department to handle other tasks.

- Identity and access management (IAM): IAM capabilities like multi-factor authentication (MFA) and zero-trust system architecture help prevent unauthorized users from gaining access to your data, keeping your students and faculty safe.

5. Build a data-driven culture

Cultural acceptance is critical for making any change in your institution’s data collection strategies, including efforts to improve transparency.

If your institution is lagging behind in digital transformation, a fully data-driven culture can be challenging to implement. However, these tips can help make navigating this shift a little easier:

- Lead by example: Cultural changes tend to start at the top of an organization and trickle down over time, which is why it’s critical to get buy-in from institutional leadership as soon as possible.

- Improve data literacy: Providing training sessions and workshops on data literacy concepts, such as statistics, interpretation, and visualization, can democratize access to your institutional data, which allows more stakeholders to base their decisions on it.

- Encourage feedback: Open feedback is essential for improving transparency within your institution, as stakeholder input can help you understand where your areas for improvement lie.

6. Invest in the right data analytics tools

When you have the right tools at your disposal, you’re more likely to get the results you need. Some important features to look for in a higher ed data analytics solution include:

- Predictive analytics: An AI-powered analytics solution that evaluates historical data to identify trends is a powerful tool for proactive, informed decision-making. For example, a system that can identify changes in student behavior and alert staff to them can help your institution re-engage at-risk students before it’s too late.

- Advanced reporting: Real-time reporting capabilities provide accurate insights into your institution at the moment you need them. Intuitive, interactive dashboards and rich visualizations can help get all your stakeholders on the same page for more effective collaboration and unified decision-making.

- Integrations: A fully integrated solution with connections to leading educational software programs creates a single source of truth for your entire institution, enabling visibility into all your data for streamlined analytics and reporting. It can also eliminate the need to maintain many disparate solutions, enabling you to consolidate your tech stack and reduce technology costs.
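To give a sense of what a behavior-change alert involves, here is a deliberately simple Python sketch. It uses a fixed threshold rule rather than an AI model, so treat it as a stand-in for the idea and not as how any particular product works. The weekly login counts, the `drop_ratio` threshold, and the `flag_engagement_drops` function are all hypothetical.

```python
from statistics import mean


def flag_engagement_drops(weekly_logins, drop_ratio=0.5, baseline_weeks=4):
    """Return IDs of students whose latest week fell below drop_ratio * baseline.

    weekly_logins maps a student ID to a list of weekly login counts, oldest first.
    The baseline is the average of the `baseline_weeks` weeks before the latest one.
    """
    flagged = []
    for student_id, counts in weekly_logins.items():
        if len(counts) < baseline_weeks + 1:
            continue  # not enough history to compare against
        baseline = mean(counts[-(baseline_weeks + 1):-1])
        if baseline > 0 and counts[-1] < drop_ratio * baseline:
            flagged.append(student_id)
    return sorted(flagged)


logins = {
    "S001": [10, 12, 11, 9, 3],  # sharp drop in the latest week
    "S002": [5, 6, 5, 6, 5],     # steady activity
    "S003": [8, 7],              # too little history
}
print(flag_engagement_drops(logins))  # ['S001']
```

Comparing each student with their own recent baseline, rather than with a campus-wide average, avoids flagging students who have always logged in infrequently.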

Commit to data transparency with Watermark

To harness the full power of your institution’s data, you need the right software tools. That’s what we provide at Watermark. Our advanced data management and analytics tools help higher education institutions nationwide improve student success metrics and profitability, and leverage the data they already collect.

Deep integrations between every solution in the Watermark Educational Impact Suite (EIS) and leading learning platforms enable you to quickly pull data from across your institution for easy analysis. And powerful reporting capabilities generate rich visualizations that make your insights accessible to all stakeholders for more informed decision-making.

Request your free demo today to see how Watermark can help your institution commit to data transparency.