The conversation has shifted from what AI can do to how it scales institutional impact. For many colleges and universities, AI is now a permanent part of the academic tech stack. From predictive analytics to automated workflows and content generation, AI in higher education is increasingly part of everyday institutional operations.

Adopting AI is not just adding another tool. Each platform, integration, and dataset increases complexity within an already crowded ecosystem. The challenge is not whether to adopt AI, but how to do so in a way that is sustainable, secure, and strategically aligned.

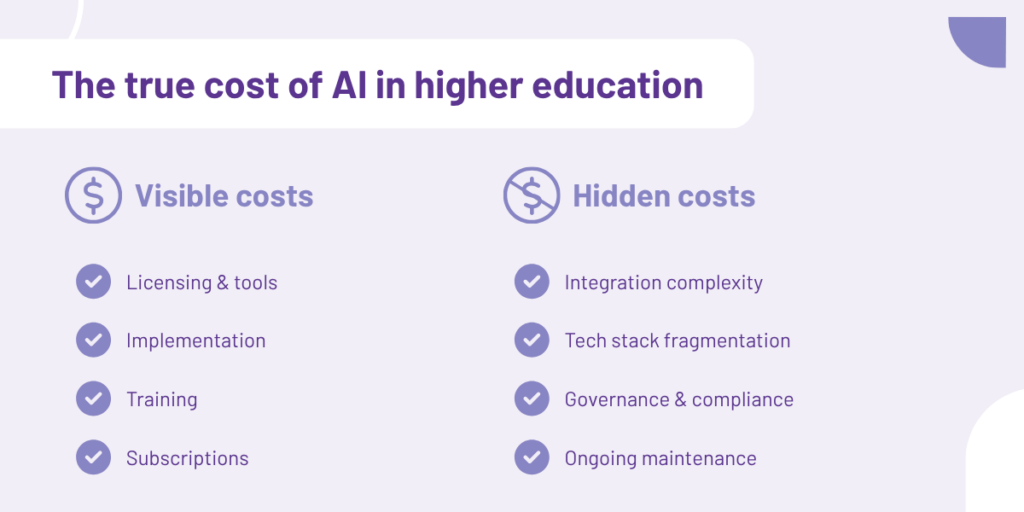

Understanding the true cost of AI in education requires looking beyond licensing to operational overhead, governance, integration, and long-term architectural decisions.

The visible cost of AI adoption

When institutions evaluate new AI capabilities, the first costs that typically come into focus are the most obvious ones.

Upfront investment

Initial adoption often includes licensing, implementation support, integration, and training. While these costs may deliver value, they represent only part of the total investment.

Ongoing operational costs

After deployment, institutions must account for:

- Subscriptions and renewals

- Maintenance and technical support

- Model updates or retraining

- Compliance oversight

- Ongoing training and adoption

Over time, these operational expenses accumulate across departments and platforms, making it increasingly difficult to track the true cost of AI in education across the institution.
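One way to keep these recurring costs visible is to record them in a single ledger and roll them up by tool and by department. The sketch below is a minimal illustration in Python, not a budgeting method the article prescribes: the `CostItem` fields, the department and tool names, and every dollar figure are hypothetical placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CostItem:
    department: str     # hypothetical department name
    tool: str           # hypothetical AI tool name
    category: str       # e.g. "subscription", "support", "training"
    annual_cost: float  # placeholder figure, not real pricing


def total_by(items, key):
    """Sum annual cost grouped by the given CostItem attribute name."""
    totals = defaultdict(float)
    for item in items:
        totals[getattr(item, key)] += item.annual_cost
    return dict(totals)


# Illustrative placeholder data only.
items = [
    CostItem("Advising", "ToolA", "subscription", 12000.0),
    CostItem("Advising", "ToolA", "training", 3000.0),
    CostItem("Assessment", "ToolB", "subscription", 8000.0),
    CostItem("Assessment", "ToolB", "compliance", 2000.0),
    CostItem("IT", "ToolA", "support", 5000.0),
]

by_tool = total_by(items, "tool")
by_department = total_by(items, "department")
institution_total = sum(item.annual_cost for item in items)

print(by_tool)
print(by_department)
print(institution_total)
```

In this made-up ledger, the license for ToolA appears in the Advising budget while its support cost sits with IT. That split is the kind of spread that makes the institution-wide total hard to see unless the line items are gathered in one place.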

The hidden cost: Tech stack fragmentation

Beyond direct costs, AI can increase system complexity.

Most institutions already manage multiple systems for learning, assessment, analytics, and reporting. Each new AI tool adds dependencies across this ecosystem. Without coordination, even one tool can introduce ripple effects that increase risk and administrative burden.

The growing problem of tool sprawl

Many institutions already manage dozens of platforms. Adding AI can lead to overlapping functionality, redundant workflows, and inconsistent data, making systems harder to govern and maintain.

When AI multiplies integration points

AI relies on data from multiple systems. Each new capability introduces integrations and data pipelines that must be managed and secured. Over time, this creates a dense web of dependencies where small changes can have widespread impact.
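To make the idea of widespread impact concrete, the dependencies can be modeled as a directed graph and searched for everything downstream of a change. The following is a rough Python sketch under stated assumptions: the system names and the `feeds` map are invented for illustration and do not describe any particular institution or product.

```python
from collections import deque

# Hypothetical systems; an entry A -> [B, ...] means "B consumes data from A".
feeds = {
    "SIS": ["LMS", "Analytics"],
    "LMS": ["AI tutor", "Analytics"],
    "Analytics": ["AI early-alert", "Reporting"],
    "AI tutor": [],
    "AI early-alert": ["Reporting"],
    "Reporting": [],
}


def affected_by(change, graph):
    """Return every system downstream of `change`, found by breadth-first search."""
    seen = set()
    queue = deque([change])
    while queue:
        current = queue.popleft()
        for downstream in graph.get(current, []):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    return seen


print(sorted(affected_by("LMS", feeds)))
print(sorted(affected_by("Reporting", feeds)))
```

In this toy map, a change to the LMS reaches four other systems, while a change to Reporting reaches none. Each AI tool added as a consumer lengthens the list of systems to check before a change is made upstream.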

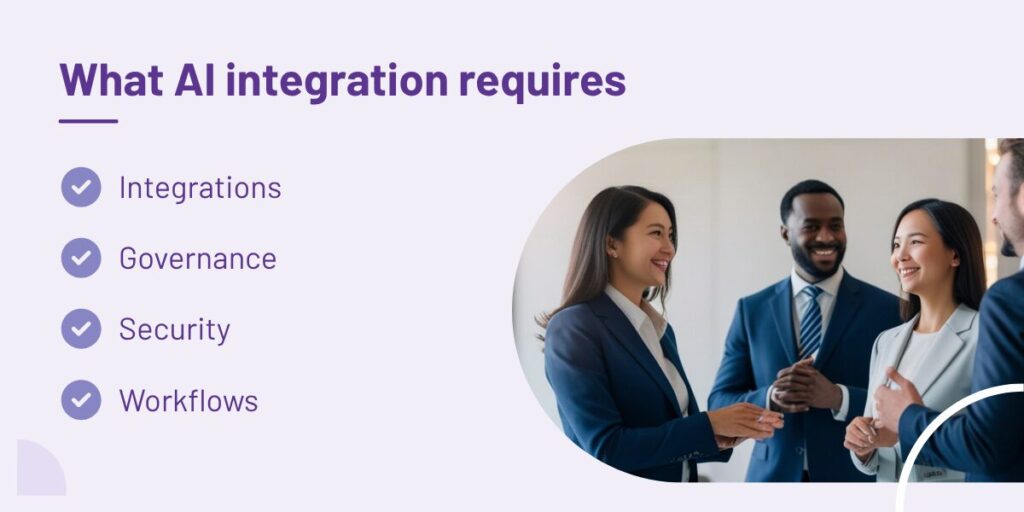

Integration is not a plug-and-play exercise

AI tools are often positioned as easy add-ons, but integration in higher education is rarely simple.

Institutional data environments are complex. Systems may have been implemented years apart, use different data standards, or rely on custom configurations that require careful coordination when introducing new technologies.

Effective implementation of generative AI in higher education requires thoughtful planning across multiple areas:

- Data architecture and interoperability

- Security and compliance policies

- Identity and access management

- Workflow integration across departments

- Governance for model outputs and usage

Without this planning, institutions risk spending more time managing integrations than realizing value.

Who controls the intelligence? Data governance in the age of AI

AI introduces new governance responsibilities. Unlike traditional software features, AI systems actively consume institutional data, identify patterns, and generate outputs that may influence decisions across teaching, operations, and leadership.

This raises important governance questions:

- What data is being used?

- Who oversees how it is applied?

- How are outputs validated?

- How are privacy and compliance maintained?

These issues become particularly important as generative AI in higher education expands into areas such as learning support, assessment analysis, and institutional reporting. A robust governance framework ensures AI capabilities enhance institutional decision-making rather than introducing new sources of risk.

The cost of waiting and the cost of adopting AI without a strategy

While the risks of uncoordinated adoption are high, the cost of waiting is equally significant: institutions that delay AI integration risk falling behind in operational efficiency and failing to meet the evolving expectations of tech-savvy students and faculty.

However, layering tools onto an already complex tech stack without a roadmap can lead to fragmented data, duplicated functionality, and integration challenges. The most effective path forward is not simply adopting more tools, but implementing AI within a coherent institutional architecture that delivers a measurable return on the AI investment while maintaining governance and operational stability.

A smarter approach: AI within a unified ecosystem

Many institutions are shifting toward consolidation rather than expansion.

Embedding AI within a unified ecosystem allows institutions to:

- Reduce duplicate systems

- Centralize governance

- Simplify integrations

- Improve visibility across operations

- Scale more sustainably

When AI is implemented as part of a broader technology strategy, institutions can focus less on managing tools and more on generating meaningful insights.

Ultimately, the goal is not to pick, in isolation, whichever AI tool promises the biggest cost savings. The real objective is building a cohesive ecosystem where AI capabilities support institutional priorities without adding unnecessary complexity.

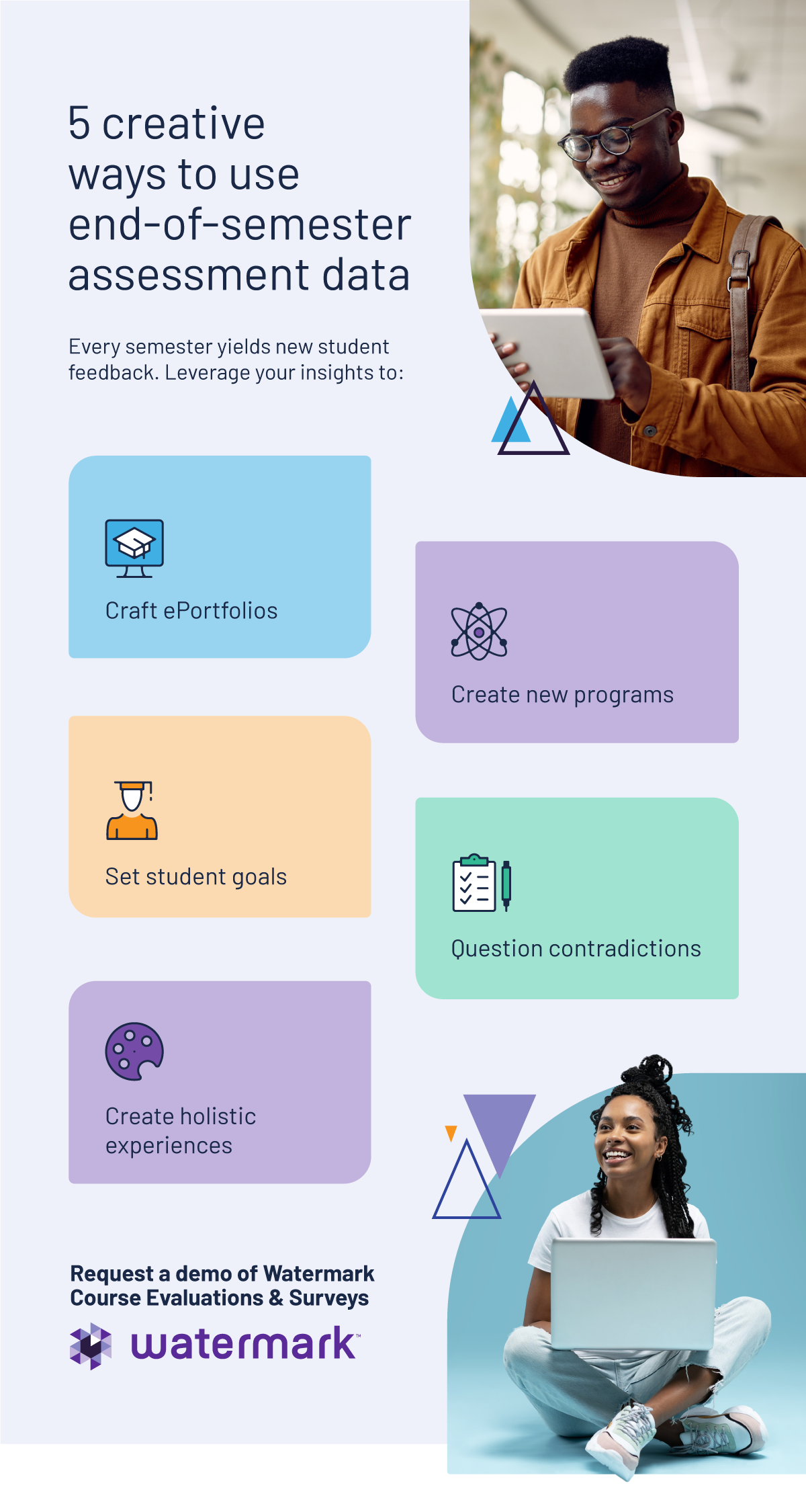

AI-powered solutions designed specifically for higher education, such as Watermark’s, allow institutions to integrate AI capabilities directly into the systems they already rely on for assessment, faculty activity reporting, accreditation support, and institutional effectiveness.

AI is an architectural decision, not a feature decision

Artificial intelligence will continue to play an increasingly important role across teaching, assessment, and institutional operations.

But the success of AI in higher education will depend less on individual tools and more on how institutions design the architecture that supports them.

A strategic approach helps reduce fragmentation, strengthen governance, and support long-term institutional value.

To learn how Watermark supports responsible AI implementation within a connected ecosystem, explore our approach to AI in higher education. Or schedule a demo to see how Watermark can help your institution strategically implement AI while managing costs, complexity, and governance.